8 Key Facts About NVIDIA Spectrum-X and MRC: Powering the World's Largest AI Factories

The race to build the most powerful AI factories demands networking that can keep up with the relentless growth of AI models. NVIDIA Spectrum-X Ethernet, now enhanced with Multipath Reliable Connection (MRC), has emerged as the leading solution for gigascale AI deployments. Here are eight essential insights into how this open, AI-native fabric is setting new standards for performance, resilience, and scale.

- 1. What Makes Spectrum-X the Backbone of AI Factories

- 2. Industry Leaders Trust Spectrum-X for Mission-Critical AI

- 3. MRC: The Protocol That Revolutionizes RDMA Transport

- 4. A Road Network Analogy for Understanding MRC

- 5. Proven Success: OpenAI’s Experience with MRC

- 6. Microsoft and Oracle Deploy MRC in the Largest AI Factories

- 7. Open Specification Through the Open Compute Project

- 8. Key Benefits: Load Balancing, Resilience, and Visibility

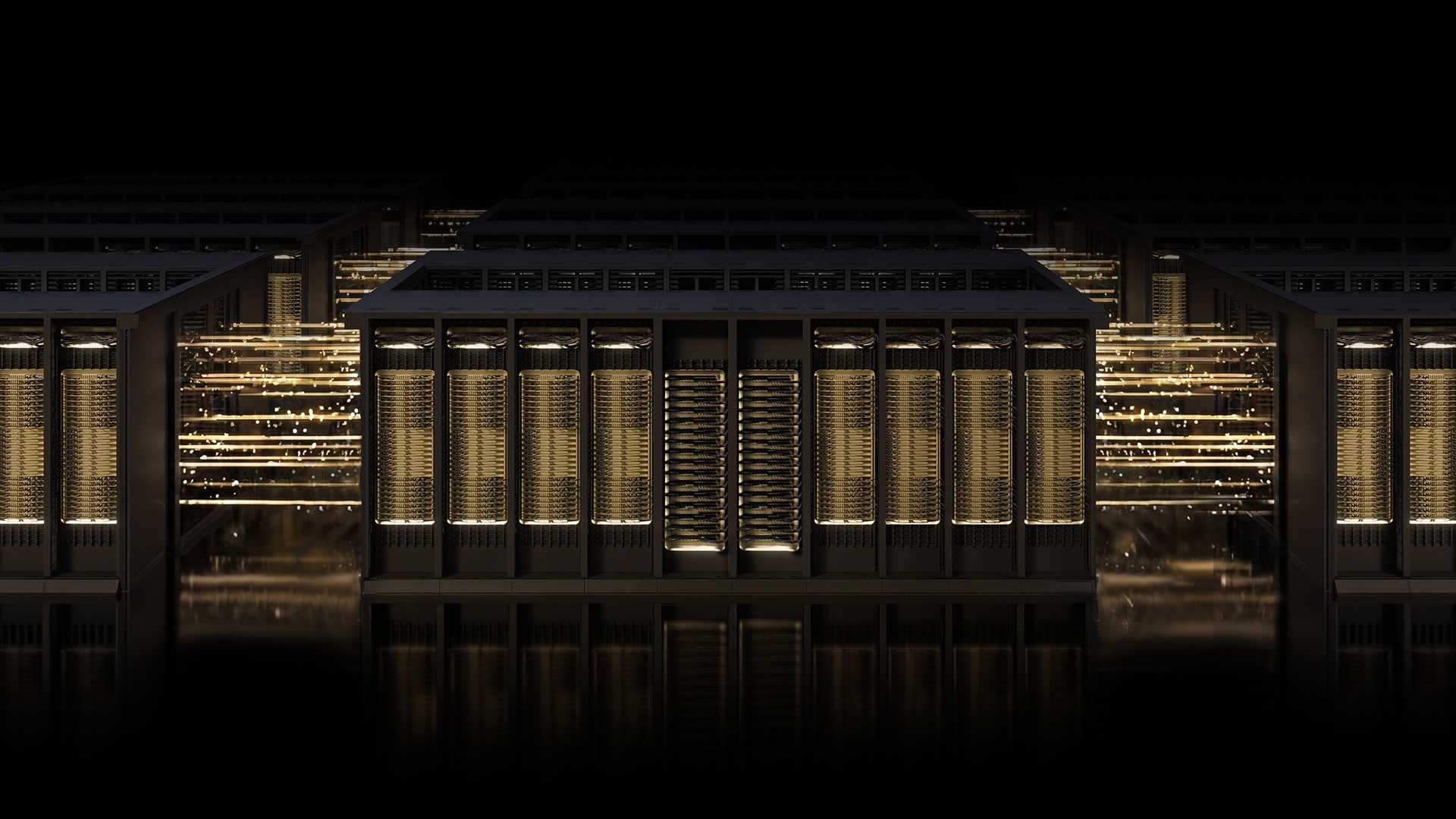

1. What Makes Spectrum-X the Backbone of AI Factories

NVIDIA Spectrum-X is not just another Ethernet fabric; it is purpose-built for the unique demands of AI workloads. Unlike general-purpose data center traffic, AI training requires massive data throughput with minimal latency, especially as models scale to thousands of GPUs. Spectrum-X combines purpose-built hardware, deep telemetry, and intelligent fabric control to deliver the high performance and reliability that AI factories need. Its open, AI-native architecture is designed to move data efficiently across the entire cluster, preventing bottlenecks that can stall training runs. This foundation allows organizations to build gigascale AI infrastructure with confidence that the network can handle the most demanding computational tasks.

2. Industry Leaders Trust Spectrum-X for Mission-Critical AI

Some of the world's most advanced AI organizations rely on Spectrum-X. OpenAI, Microsoft, and Oracle are among the key adopters, deploying this networking technology in their largest AI factories. These companies have almost no tolerance for downtime or performance degradation, because any network hiccup could stall frontier model training for hours. By choosing Spectrum-X, they gain a proven, scalable infrastructure that supports their ambitious AI goals. Their commitment underscores the platform’s ability to deliver consistent throughput, low latency, and high availability even under extreme loads. For any organization building state-of-the-art AI, Spectrum-X represents the gold standard in networking.

3. MRC: The Protocol That Revolutionizes RDMA Transport

Multipath Reliable Connection (MRC) is an RDMA transport protocol that fundamentally changes how data flows across AI networks. In traditional setups, a single RDMA connection follows one path—much like a single-lane road. If that road gets congested, everything slows down. MRC breaks this limitation by allowing one RDMA connection to distribute traffic across multiple network paths simultaneously. This multipath approach dramatically improves throughput, load balancing, and availability. It’s a game-changer for large-scale AI training fabrics because it ensures that no single point of congestion can cripple performance. MRC was co-developed by NVIDIA, Microsoft, and OpenAI, combining deep expertise from hardware, software, and AI operations.
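To make the single-path versus multipath contrast concrete, here is a minimal back-of-the-envelope sketch. It is a toy model, not the MRC specification: the function names, the four paths, and their per-tick rates are all hypothetical, and it assumes an idealized sender that can keep every path full on every tick.

```python
from math import ceil


def single_path_ticks(num_packets: int, path_rates: list[int], chosen: int) -> int:
    """Ticks needed when the whole connection is pinned to one path."""
    return ceil(num_packets / path_rates[chosen])


def multipath_ticks(num_packets: int, path_rates: list[int]) -> int:
    """Ticks needed when one connection spreads packets over every path.

    Idealized assumption: each tick, every path carries its full rate.
    """
    return ceil(num_packets / sum(path_rates))


# Hypothetical fabric: path 0 is congested (1 packet per tick),
# the other three paths each carry 4 packets per tick.
rates = [1, 4, 4, 4]

single = single_path_ticks(1000, rates, chosen=0)  # stuck on the congested path
multi = multipath_ticks(1000, rates)               # all four paths share the load

print(f"single path: {single} ticks, multipath: {multi} ticks")
```

In this toy setup, the connection that happens to land on the congested path is limited by that path alone, while the multipath connection is limited only by the combined capacity of all paths.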

4. A Road Network Analogy for Understanding MRC

To grasp MRC’s impact, picture upgrading a single-lane road through a small town to a well-planned grid of streets, then adding a real-time traffic app. In the old system, any blockage—a stalled car, a traffic jam—brings travel to a halt. With MRC, multiple routes are always available, and the system dynamically reroutes around slowdowns or road closures. Traffic flows smoothly even when some paths experience trouble. This analogy captures how MRC ensures every GPU gets the bandwidth it needs throughout a training run, avoiding the idle time that would otherwise cripple large-scale AI production. It’s a smart, adaptive approach that keeps the entire fabric humming efficiently.

5. Proven Success: OpenAI’s Experience with MRC

OpenAI has already validated MRC in production. During the Blackwell generation, deploying MRC yielded tangible results. According to Sachin Katti, head of industrial compute at OpenAI, “MRC’s end-to-end approach enabled us to avoid much of the typical network-related slowdowns and interruptions and maintain the efficiency of frontier training runs at scale.” This real-world endorsement highlights how MRC reduces the impact of network issues that traditionally plague large AI clusters. For OpenAI, which runs some of the most compute-intensive workloads on the planet, MRC has become an essential tool for maintaining high GPU utilization and minimizing training interruptions.

6. Microsoft and Oracle Deploy MRC in the Largest AI Factories

Microsoft and Oracle have joined the MRC movement, integrating it into their largest AI data centers. Microsoft’s Fairwater facility and Oracle Cloud Infrastructure’s (OCI) Abilene data center are purpose-built for training and deploying cutting-edge frontier LLMs. Both rely on MRC to meet strict performance, scale, and efficiency requirements. These deployments build on a long-standing collaboration among NVIDIA, Microsoft, and OpenAI focused on advancing AI infrastructure. By embedding MRC into their networks, these hyperscalers ensure that their AI factories can handle the immense demands of models with billions of parameters. Spectrum-X Ethernet provides the foundation, delivering the reliability necessary for production-grade AI at massive scale.

7. Open Specification Through the Open Compute Project

After proving its worth in production on NVIDIA Spectrum-X hardware, MRC has been released as an open specification through the Open Compute Project (OCP). This move democratizes access to advanced multipath RDMA capabilities, allowing the broader industry to benefit. The open specification includes the set of rules that govern how data moves between systems, ensuring interoperability and fostering innovation. By contributing MRC to OCP, NVIDIA and its partners aim to accelerate adoption and standardize high-performance networking for AI. This openness aligns with the community’s desire for collaborative, standards-based solutions that drive the entire ecosystem forward.

8. Key Benefits: Load Balancing, Resilience, and Visibility

MRC delivers several concrete advantages for AI training. First, it achieves high GPU utilization by load-balancing traffic across all available paths, ensuring every GPU gets the bandwidth it needs throughout a job. Second, even under congestion, MRC dynamically avoids overloaded paths, sustaining high bandwidth. Third, when data loss occurs, intelligent retransmission enables rapid, precise recovery, minimizing the impact of short-lived interruptions on long-running jobs. Finally, administrators gain fine-grained visibility and control over traffic paths, simplifying operations and accelerating troubleshooting. These capabilities combine to reduce GPU idle time, boost overall efficiency, and make large-scale AI training more predictable and manageable.
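The first three benefits can be illustrated with a small toy sender. This sketch is illustrative only and does not reflect the MRC wire protocol or any NVIDIA API: `Path`, `ToySender`, the least-in-flight selection rule, and the "retransmit on a different path" policy are all assumptions chosen to show load balancing, avoidance of unhealthy paths, and per-packet recovery in a few lines.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Path:
    name: str
    healthy: bool = True
    in_flight: int = 0  # packets sent on this path and not yet acknowledged


@dataclass
class ToySender:
    paths: list[Path]
    unacked: dict[int, str] = field(default_factory=dict)  # seq -> path name

    def _by_name(self, name: str) -> Path:
        return next(p for p in self.paths if p.name == name)

    def pick_path(self, avoid: str | None = None) -> Path:
        """Choose the healthy path with the fewest packets in flight."""
        healthy = [p for p in self.paths if p.healthy]
        if not healthy:
            raise RuntimeError("no healthy path available")
        # Prefer paths other than `avoid`, but fall back if it is the only one.
        preferred = [p for p in healthy if p.name != avoid] or healthy
        return min(preferred, key=lambda p: p.in_flight)

    def send(self, seq: int, avoid: str | None = None) -> str:
        path = self.pick_path(avoid)
        path.in_flight += 1
        self.unacked[seq] = path.name
        return path.name

    def ack(self, seq: int) -> None:
        self._by_name(self.unacked.pop(seq)).in_flight -= 1

    def retransmit(self, seq: int) -> str:
        """Resend only the lost packet, steering it away from its old path."""
        old = self.unacked.pop(seq)
        self._by_name(old).in_flight -= 1
        return self.send(seq, avoid=old)


sender = ToySender([Path("A"), Path("B"), Path("C")])

# Load balancing: six packets spread evenly over three paths.
placements = [sender.send(seq) for seq in range(6)]

# Path B fails; only the packet lost on it (seq 1) is resent, on another path.
sender.paths[1].healthy = False
new_path = sender.retransmit(1)

print(placements, new_path)
```

A real transport would base path choice on richer congestion signals and detect loss through acknowledgments and timers; the point here is only that recovery can be scoped to the individual lost packet rather than the whole connection.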

The combination of NVIDIA Spectrum-X Ethernet and MRC represents a significant leap forward for AI networking. By leveraging purpose-built hardware, intelligent protocols, and open collaboration, this technology enables the world’s largest AI factories to operate with unprecedented performance and reliability. As AI models continue to grow in size and complexity, the need for robust, scalable networking will only increase. Spectrum-X and MRC are setting the standard today, ensuring that tomorrow’s AI breakthroughs have the infrastructure they deserve.