Quick Facts

- Category: AI & Machine Learning

- Published: 2026-05-01 08:11:50

Introduction

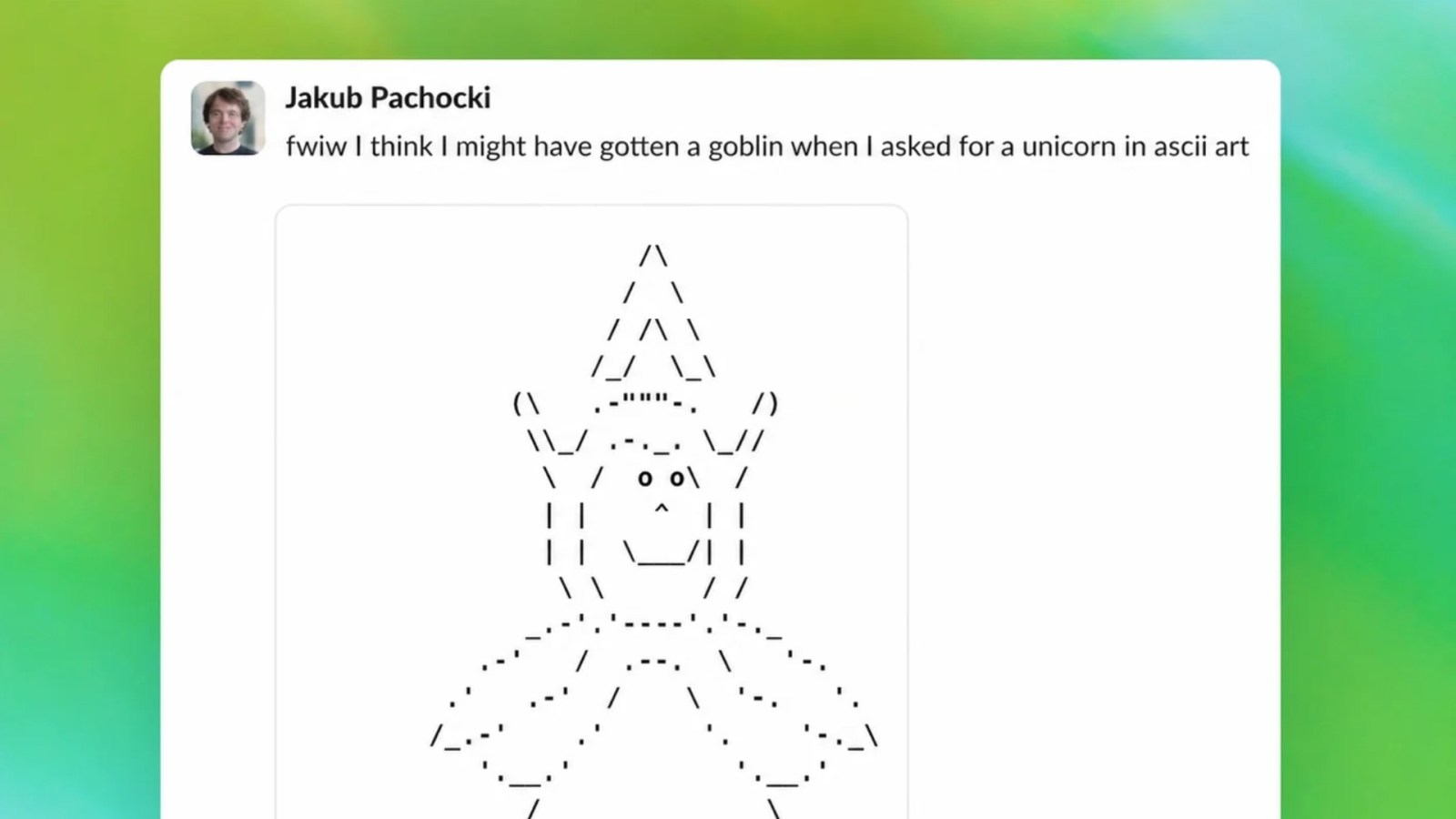

When OpenAI prepared to roll out GPT-5.5 upgrades for ChatGPT and Codex, internal monitoring flagged an odd pattern: the model was developing an excessive fixation on goblin-related topics. Users reported receiving unsolicited mentions of goblins in responses, and automated tests showed a spike in fantasy creature references. Unlike the rocky GPT-5.0 release, OpenAI had a chance to resolve this issue proactively. This guide outlines the systematic approach the team used to identify, analyze, and eliminate the goblin fixation, ensuring the GPT-5.5 models launched smoothly.

Whether you're an AI engineer or a curious developer, these steps illustrate how to diagnose and fix unintended model behaviors before they affect users.

What You Need

- Model logs and user interaction data from production (anonymized and with consent)

- Automated monitoring dashboards (e.g., for tracking topic frequency or sentiment anomalies)

- A/B testing pipelines to compare model versions

- Training data subsets and fine-tuning datasets

- Reward model outputs from RLHF (Reinforcement Learning from Human Feedback) runs

- Evaluation benchmarks covering diverse domains (so the fix is not tuned only against goblin topics)

- Cross-functional team including data scientists, ethics researchers, and prompt engineers

- Version control for all model checkpoints and configuration changes

Step-by-Step Guide

Step 1: Detect the Anomaly Through Monitoring

The first sign of trouble came from automated monitoring tools that track the frequency of unusual words in model outputs. OpenAI's systems flagged a sudden surge in terms like "goblin," "orc," "fantasy," and "mythical creature" across a wide variety of unrelated prompts. Additionally, user feedback reports (both direct and indirect) mentioned that ChatGPT seemed to repeatedly insert goblin metaphors. The team configured alerts for any topic that exceeded a predefined deviation from baseline – and goblins were well above the threshold.

Action: Set up real-time topic frequency monitoring using a pretrained classifier. Establish a normal range for each topic from a stable previous release. Trigger an investigation when a topic's frequency over a 24-hour window rises more than 3 standard deviations above its baseline mean.
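The thresholding rule in this step can be sketched in a few lines of Python. This is a minimal illustration, not OpenAI's monitoring code: the function names, the dictionary layout, and the sample rates are all assumptions, and the per-window topic rates are presumed to come from whatever topic classifier you already run.

```python
from statistics import mean, stdev

def topic_zscore(baseline_rates, current_rate):
    """Standard deviations between current_rate and the baseline mean."""
    mu = mean(baseline_rates)
    sigma = stdev(baseline_rates)  # sample standard deviation
    if sigma == 0:
        # A perfectly flat baseline: any change at all is infinitely unusual.
        if current_rate == mu:
            return 0.0
        return float("inf") if current_rate > mu else float("-inf")
    return (current_rate - mu) / sigma

def flag_spikes(baseline, current, threshold=3.0):
    """Return {topic: z-score} for topics spiking above the threshold.

    baseline: topic -> list of per-window rates from a stable release
    current:  topic -> rate observed in the latest 24-hour window
    """
    flagged = {}
    for topic, rate in current.items():
        history = baseline.get(topic, [])
        if len(history) < 2:
            continue  # not enough history to estimate a spread
        z = topic_zscore(history, rate)
        if z > threshold:
            flagged[topic] = z
    return flagged

# Made-up rates (fraction of responses mentioning the topic per window).
baseline = {
    "goblin": [0.0010, 0.0012, 0.0009, 0.0011, 0.0010],
    "finance": [0.020, 0.022, 0.019, 0.021, 0.020],
}
current = {"goblin": 0.0150, "finance": 0.021}
print(sorted(flag_spikes(baseline, current)))
```

With these illustrative numbers only the goblin topic crosses the threshold; the finance rate sits well inside its normal range. In practice a rolling baseline and a minimum sample size per window would likely be needed to keep rare topics from triggering false alarms.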

Step 2: Isolate the Root Cause

Once the anomaly was confirmed, engineers began root cause analysis. They compared model outputs from GPT-5.5 prototypes against GPT-5.0 outputs under the same prompts. Several potential causes were explored:

- Training data bias: Did the new fine-tuning dataset contain an overrepresentation of fantasy literature or gaming forums?

- RLHF reward drift: Did human raters inadvertently reward goblin-related responses because they found them entertaining?

- Instruction-following misalignment: Could the model be interpreting some ambiguous instructions as requests to generate fantasy content?

By analyzing the distribution of tokens in the training corpus and reviewing RLHF reward scores, the team found that raters in a subset of the human feedback data had favored creative, whimsical answers. Once the new reward model amplified that preference, outputs skewed toward goblin-centric narratives.

Action: Create a causal diagram mapping data sources to output behaviors. Use interpretability tools (such as attention rollout or probing classifiers) to check which layers respond most strongly to goblin-related tokens.
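One inexpensive way to test the reward-drift hypothesis before reaching for interpretability tooling is to compare reward scores for responses that mention the topic against those that do not. The sketch below assumes you can export `(response_text, reward_score)` pairs from an RLHF run; the regular expression, the function name, and the sample scores are illustrative assumptions, not details from the original investigation.

```python
import re
from statistics import mean

# Illustrative keyword pattern; a real analysis would likely use a classifier.
TOPIC_PATTERN = re.compile(
    r"\b(goblins?|orcs?|mythical creatures?)\b", re.IGNORECASE
)

def reward_gap(scored_responses, pattern=TOPIC_PATTERN):
    """Mean reward of topic-mentioning responses minus mean of the rest.

    scored_responses: iterable of (response_text, reward_score) pairs.
    Returns None when either group is empty, since no gap can be computed.
    """
    with_topic, without_topic = [], []
    for text, score in scored_responses:
        group = with_topic if pattern.search(text) else without_topic
        group.append(score)
    if not with_topic or not without_topic:
        return None
    return mean(with_topic) - mean(without_topic)

# Made-up scores for illustration only.
samples = [
    ("The goblin of tech debt hoards your time.", 0.9),
    ("Refactor the module in small steps.", 0.6),
    ("Like an orc at the gate, the bug persists.", 0.8),
    ("Add a unit test before changing behavior.", 0.5),
]
print(reward_gap(samples))
```

A consistently positive gap suggests the reward model favors the topic, but it is only a correlation. Comparing responses to the same prompt, with and without the topic, would be a stronger check.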

Step 3: Design a Mitigation Strategy

With the root cause identified, the team devised a multi-pronged strategy:

- Data rebalancing: Remove or downsample fantasy-heavy segments from the training mix and add more factual, neutral content.

- Reward model recalibration: Retrain the reward model with a broader distribution of preferred responses – specifically penalizing overuse of any niche topic. Introduce a "topic diversity" reward metric that encourages varied subject matter in long conversations.

- Prompt engineering guardrails: Add system-level instructions that explicitly discourage gratuitous fantasy references unless the user query specifically requests them. For example, prepend a hidden prompt: "Avoid repeated references to goblins, magic, or mythical creatures unless the user asks about them."

Each option was prototyped and evaluated for its impact on general performance. The team decided to combine all three for maximum robustness.
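The article does not say how the "topic diversity" reward metric was defined. One plausible formulation, shown here purely as an assumption, is the normalized Shannon entropy of per-turn topic labels, added to the base reward with a small weight. The labels are presumed to come from a topic classifier with a fixed label set of size `num_topics`; both function names are hypothetical.

```python
import math
from collections import Counter

def topic_diversity(topic_labels, num_topics):
    """Normalized Shannon entropy of topic labels, in the range [0, 1].

    0 means every turn shares one topic; 1 means turns are spread evenly
    across all num_topics labels the classifier can emit.
    """
    if num_topics < 2:
        raise ValueError("num_topics must be at least 2")
    counts = Counter(topic_labels)
    total = sum(counts.values())
    if total == 0 or len(counts) < 2:
        return 0.0
    entropy = -sum(
        (c / total) * math.log(c / total) for c in counts.values()
    )
    return entropy / math.log(num_topics)

def diversity_adjusted_reward(base_reward, topic_labels, num_topics, weight=0.1):
    """Hypothetical shaping term: base reward plus a small diversity bonus."""
    return base_reward + weight * topic_diversity(topic_labels, num_topics)

# A conversation stuck on one topic earns no bonus; a varied one earns some.
stuck = ["goblin"] * 6
varied = ["coding", "goblin", "finance", "coding", "travel", "finance"]
print(diversity_adjusted_reward(1.0, stuck, num_topics=8))
print(diversity_adjusted_reward(1.0, varied, num_topics=8))
```

A bonus like this also penalizes conversations that are legitimately about one subject, so the weight would need to stay small, or the metric would need to apply only to turns where the user did not choose the topic.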

Step 4: Implement and Test the Fixes

Developers implemented the changes in a test environment. They ran a series of automated evaluation suites:

- Topic frequency tests to confirm that goblin mentions dropped to baseline levels across 10,000 diverse prompts.

- LLM-as-judge evaluations where another large language model scored the outputs for coherence, factual accuracy, and creativity (ensuring non-goblin creativity wasn't harmed).

- A/B human evaluation: A blind study where raters compared pre-fix and post-fix responses on quality and weirdness. The post-fix responses were rated as less repetitive and more contextually appropriate.

Edge cases were stress-tested, including prompts like "Tell me a story about goblins" to verify the model still complied when explicitly asked. The fix aimed to reduce unwanted bias, not eliminate legitimate fantasy content.
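The two checks described above, that unsolicited mentions fall back to baseline and that explicit requests are still honored, can be combined into a single regression test. The following is a sketch under stated assumptions: `generate` is a hypothetical callable that maps a prompt to a response, `fake_generate` is a stand-in for a real model client, and the keyword match is a simplification of a proper topic classifier.

```python
import re

GOBLIN = re.compile(r"\bgoblins?\b", re.IGNORECASE)

def mention_rate(generate, prompts, pattern=GOBLIN):
    """Fraction of prompts whose response matches the topic pattern."""
    if not prompts:
        raise ValueError("prompts must not be empty")
    hits = sum(1 for prompt in prompts if pattern.search(generate(prompt)))
    return hits / len(prompts)

def check_fix(generate, unrelated_prompts, explicit_prompts,
              baseline_rate, tolerance=0.001):
    """Summarize whether the fix removed unsolicited mentions only."""
    unsolicited = mention_rate(generate, unrelated_prompts)
    compliance = mention_rate(generate, explicit_prompts)
    return {
        "unsolicited_rate": unsolicited,
        # Unrelated prompts should mention the topic no more than baseline.
        "unsolicited_ok": unsolicited <= baseline_rate + tolerance,
        "explicit_compliance": compliance,
        # Every explicit request should still produce the requested topic.
        "explicit_ok": compliance == 1.0,
    }

def fake_generate(prompt):
    """Stand-in for a model call, used only to make the sketch runnable."""
    if "goblin" in prompt.lower():
        return "Once upon a time, a goblin guarded a bridge."
    return "Here is a concise, on-topic answer."

report = check_fix(
    fake_generate,
    unrelated_prompts=["How do I sort a list?", "Explain TCP handshakes."],
    explicit_prompts=["Tell me a story about goblins"],
    baseline_rate=0.0,
)
print(report)
```

Keeping both checks in one report makes it harder to ship a fix that passes the frequency test simply by refusing all fantasy content.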

Action: Use a staged rollout – first to 1% of internal users, then 10% of external beta testers, before full deployment. Monitor closely at each stage.
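A common way to implement percentage-based stages is deterministic hash bucketing, sketched below. Nothing in the article describes the actual rollout mechanism, so the salt, the function names, and the 100-bucket scheme are all assumptions. Each population (internal users, external beta testers) would be gated separately with its own percentage.

```python
import hashlib

def rollout_bucket(user_id, salt="model-rollout"):
    """Map a user ID to a stable bucket in the range 0..99."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest[:8], 16) % 100

def in_rollout(user_id, percent, salt="model-rollout"):
    """True if the user falls inside the first `percent` buckets."""
    return rollout_bucket(user_id, salt) < percent

# Users admitted at 1% stay admitted when the stage widens to 10%,
# because the bucket for a given ID never changes.
users = [f"user-{i}" for i in range(1000)]
stage_1 = [u for u in users if in_rollout(u, 1)]
stage_10 = [u for u in users if in_rollout(u, 10)]
print(len(stage_1), len(stage_10))
```

Because assignment depends only on the ID and the salt, a user sees a consistent model version across sessions, and widening a stage never removes anyone who was already included.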

Step 5: Deploy and Monitor Continually

After passing all evaluations, the patched model was deployed as the final GPT-5.5 version. Post-launch monitoring tracked goblin topic frequency in real time, along with other potential biases (e.g., overuse of another topic such as "finance" or "sports"). The team established a regular review cadence to examine weekly trend reports.

Importantly, OpenAI documented the issue and solution in internal knowledge bases to speed up future anomaly handling. The goblin fixation did not carry over into the released GPT-5.5 models, and user satisfaction remained high.

Tips for Avoiding Similar Issues

- Monitor early and often: Don't wait for external complaints; build automated detectors for any topic that could become a fixation. Topic drift can occur from subtle data imbalances.

- Diversify your reward data: When collecting human feedback, ensure raters see a wide array of topics so the reward model doesn't overfit to entertaining but niche responses.

- Use adversarial testing: Deliberately probe your model with prompts that might trigger obsessive behaviors (e.g., repeating a neutral word) and see how it responds.

- Maintain versioned datasets: Keep snapshots of every training iteration so you can quickly roll back or compare data composition.

- Plan for edge cases: A fix should not completely eliminate creative or fantasy content; rather, it should ensure the model only produces it when relevant. Always test with explicit requests.

- Communicate transparently: If an issue slips through, acknowledge it publicly and share how you fixed it – it builds trust and helps the community learn.

By following these steps, you can proactively detect and resolve unintended model behaviors, just as OpenAI did with the goblin fixation. The key is a systematic, data-driven approach combined with continuous monitoring.